
Design a Read-Only SaaS Rationalization Dashboard in ChatGPT with Torii MCP

Purpose

This guide shows how to turn a working Torii web MCP connection in ChatGPT into a focused, read-only dashboard experience for software usage, license consumption, spend, and renewal analysis.

What this type of dashboard is for

This dashboard pattern is designed to help teams answer questions like:

- Which apps are heavily used by a specific department or business unit?

- Where is license concentration highest?

- Where is spend concentrated?

- Which organizational areas have overlapping applications?

- Which contracts or renewals deserve review?

- Which apps belong in a human review queue for rationalization?

This is not a general-purpose “do anything in Torii” assistant. It is a read-only dashboard assistant.

Why Torii MCP fits this use case

Torii’s MCP server lets AI assistants securely query and act on Torii data, including apps, users, contracts, workflows, and more. Assistants can search and read apps, users, and contracts; list workflows and audit-style data; and run tools such as match_apps and search_apps.

This makes Torii web MCP a strong fit for dashboards because it gives ChatGPT direct access to the Torii data needed for natural-language drilldowns.

Read-only operating model

Torii’s MCP article is explicit that connected assistants can take actions you grant them, such as updating a user or creating a contract. For this dashboard design, that is intentionally out of scope.

Allowed behavior

Use the dashboard assistant to:

- Read apps, users, contracts, workflows, and related metadata where available

- Summarize software usage and coverage patterns

- Group and compare apps across organizational slices

- Identify overlap and rationalization candidates

- Summarize contract and renewal exposure

- Generate decision queues for human review

- Explain why an app or grouping was flagged

Not allowed

Do not use this dashboard assistant to:

- Update users

- Create contracts

- Modify application ownership

- Trigger workflows

- Remove or assign licenses

- Write back to Torii records
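
If you want to enforce this boundary outside the prompt as well, one option is an allowlist check on tool names before a call is forwarded. The following is a minimal sketch under stated assumptions: only `search_apps` and `match_apps` are named in this guide, `update_user` is a hypothetical placeholder for a write-style tool, and `is_allowed` and `filter_tool_calls` are illustrative helpers, not part of Torii or ChatGPT.

```python
# Tool names the dashboard assistant may call. search_apps and match_apps
# are mentioned in this guide; extend this set only with tools you have
# verified to be read-only in your own tenant.
READ_ONLY_TOOLS = frozenset({"search_apps", "match_apps"})


def is_allowed(tool_name: str) -> bool:
    """Return True only for tools on the read-only allowlist."""
    return tool_name in READ_ONLY_TOOLS


def filter_tool_calls(requested: list[str]) -> tuple[list[str], list[str]]:
    """Split requested tool names into (allowed, blocked), preserving order."""
    allowed = [name for name in requested if is_allowed(name)]
    blocked = [name for name in requested if not is_allowed(name)]
    return allowed, blocked


# "update_user" is a hypothetical write-style tool name used for illustration.
allowed, blocked = filter_tool_calls(["search_apps", "update_user", "match_apps"])
```

An allowlist fails closed: a tool that is not explicitly listed is blocked, so newly exposed write tools do not slip through by default.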

Why read-only first

A read-only design is the safest first deployment because:

- It is easier to approve internally

- It is easier to explain to stakeholders

- It keeps the assistant focused on discovery and analysis

- It avoids accidental expansion from “dashboard” into “automation”

The normalized dashboard model

Use the same vocabulary consistently across prompts, outputs, and any later packaging.

Functional Domain

A broad software category.

Examples:

- Collaboration

- Project Management

- CRM

- Security

- Design

Functional Capability

The more specific business capability an app supports.

Examples:

- Team chat

- Video conferencing

- Contract lifecycle

- Password management

- Ticketing

Functional Capability Path

A hierarchy that combines domain and capability.

Examples:

- Collaboration > Team Chat

- Security > Password Management

- IT Operations > Ticketing

This helps users compare apps that may have similar business functions even if their names or vendors differ.
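
As a rough illustration, the path can be modeled as a small value type so that two apps with the same domain and capability compare as equal regardless of vendor. This is a sketch only: `CapabilityPath`, `parse_path`, and the app names are assumptions made for the example, not Torii fields.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CapabilityPath:
    domain: str
    capability: str

    def __str__(self) -> str:
        return f"{self.domain} > {self.capability}"


def parse_path(text: str) -> CapabilityPath:
    """Parse 'Domain > Capability' into its two parts."""
    domain, sep, capability = text.partition(">")
    if not sep or not domain.strip() or not capability.strip():
        raise ValueError(f"not a capability path: {text!r}")
    return CapabilityPath(domain.strip(), capability.strip())


# App names here are placeholders, not real Torii records.
apps = {
    "App A": parse_path("Collaboration > Team Chat"),
    "App B": parse_path("Collaboration > Team Chat"),
    "App C": parse_path("Security > Password Management"),
}
same_function = apps["App A"] == apps["App B"]
```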

Organizational View

The business dimension used for dashboard drilldowns.

Examples:

- Department

- Business Unit

- Legal Entity

- Region

- Cost Center

- A customer-defined grouping field

Do not hardcode Department

Department is a common choice, but it should not be the only supported slice.

Your dashboard should treat Organizational View as a configurable concept. That keeps the experience portable across tenants with different operating models.

Step 1: Choose the first Organizational View

Start with one primary drilldown field.

Good first choices usually have:

- Broad population coverage

- Stable business meaning

- Low null rates

- Clear stakeholder ownership

- Low duplication or ambiguity

Common candidates include:

- Department

- Business Unit

- Legal Entity

- Region

- Cost Center

Step 2: Validate the chosen slice

Before you standardize on a field, verify that it is good enough for dashboard use.

Check for:

- Missing values

- Inconsistent naming

- Too many one-off labels

- Merged concepts in a single field

- Values that are too technical for business readers

Example of a good slice

A field that cleanly separates Sales, Marketing, Finance, and Engineering.

Example of a weak slice

A field that mixes human-readable names, IDs, old labels, and partial nulls in the same column.
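
The checks in this step can be approximated with a quick profile of the candidate field. The sketch below assumes the field values have already been exported into a plain Python list; `profile_slice` and its `one_off_threshold` parameter are hypothetical names chosen for illustration.

```python
from collections import Counter
from typing import Optional


def profile_slice(values: list[Optional[str]], one_off_threshold: int = 1) -> dict:
    """Summarize null rate and label quality for one candidate field."""
    total = len(values)
    present = [v.strip() for v in values if v is not None and v.strip()]
    counts = Counter(present)
    # Labels that differ only by letter case hint at inconsistent naming.
    folded = Counter(label.casefold() for label in counts)
    case_variants = sorted(
        label for label in counts if folded[label.casefold()] > 1
    )
    one_offs = sorted(
        label for label, n in counts.items() if n <= one_off_threshold
    )
    return {
        "null_rate": (total - len(present)) / total if total else 0.0,
        "distinct_labels": len(counts),
        "case_variants": case_variants,
        "one_off_labels": one_offs,
    }


# Invented sample that mixes readable names, case drift, blanks, and an ID.
sample = ["Sales", "Sales", "sales", "Finance", "Finance", None, "", "FIN-0042"]
report = profile_slice(sample)
```

For this sample the profile reports a 25% null rate, one pair of case variants, and two one-off labels, which is the kind of result that marks a weak slice.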

Step 3: Save an approved Organizational View mapping

Once you choose a primary slice, document it for reuse.

Recommended fields to save in your mapping:

- Source field name

- Display label

- Allowed values or normalization rules

- Null handling rule

- Fallback rule

- Owner of the mapping

- Last reviewed date
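
One possible way to record the mapping is a small structure that carries the fields above together with a normalization helper. Everything in this sketch is an assumption for illustration: the `OrgViewMapping` class, the default labels "Unmapped" and "Other", and the sample values are not defined by Torii.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class OrgViewMapping:
    source_field: str                 # field name as it appears in the source data
    display_label: str                # label shown to business readers
    normalization: dict[str, str] = field(default_factory=dict)  # raw -> approved
    null_label: str = "Unmapped"      # null handling rule
    fallback_label: str = "Other"     # fallback for unrecognized values
    owner: str = ""
    last_reviewed: str = ""           # ISO date string

    def normalize(self, raw: Optional[str]) -> str:
        """Apply null handling, then normalization rules, then the fallback."""
        if raw is None or not raw.strip():
            return self.null_label
        key = raw.strip().casefold()
        return self.normalization.get(key, self.fallback_label)


# All values below are illustrative; substitute the field you approved.
mapping = OrgViewMapping(
    source_field="department",
    display_label="Department",
    normalization={"sales": "Sales", "fin": "Finance", "finance": "Finance"},
    owner="IT Operations",
    last_reviewed="2025-01-31",
)
```

Keeping null handling and fallback as explicit labels means unknown values stay visible in the output instead of disappearing into another group.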

Step 4: Use MCP-assisted discovery carefully

The Torii MCP lets ChatGPT query Torii data and use MCP-exposed tools. It does not publish a full tool inventory for every field-inspection scenario.

Because of that, use a careful discovery workflow:

- Ask ChatGPT to inspect available Torii-side attributes relevant to apps, users, contracts, and usage context

- Identify candidate fields that can serve as the Organizational View

- Compare their business usefulness

- Approve one primary slice

- Reuse that slice consistently

If you later need a more explicit field inventory or exact endpoint behavior, verify that separately before publishing or standardizing it.

Dashboard sections

The starting point for a strong read-only dashboard usually includes six core sections.

1. Portfolio overview

Business question

What does the software portfolio look like at a glance?

Typical outputs

- Total apps under review

- Key functional domains

- Top concentration areas

- High-level overlap signals

- Top review candidates

Why it matters

This gives stakeholders a quick orientation before they drill down.

2. Usage by Organizational View

Business question

How is software usage distributed across the selected business slice?

Typical outputs

- Apps with the highest usage in each Department or Business Unit

- Concentration by team, entity, or region

- Areas where usage is broad versus narrow

Why it matters

This helps distinguish enterprise-wide platforms from localized tools.

3. License distribution by Organizational View

Business question

Where are licenses concentrated, and how unevenly are they distributed?

Typical outputs

- License counts by Organizational View

- Apps with high license concentration in a small number of groups

- Apps with wide distribution but low engagement, if usage context is available

Why it matters

This supports rationalization and license review conversations.

4. Spend concentration by Organizational View

Business question

Which organizational slices account for the most software spend?

Typical outputs

- Spend by Department, Business Unit, Legal Entity, or Cost Center

- Apps driving the largest commercial footprint in each slice

- Concentration of spend across overlapping capabilities

Why it matters

This helps finance and IT focus on the biggest opportunities first.
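
A simple way to express spend concentration is each slice's share of total spend, plus the combined share of the largest slices. The sketch below assumes spend has already been totaled per slice; the function names and figures are invented for illustration.

```python
def spend_shares(spend_by_slice: dict[str, float]) -> dict[str, float]:
    """Return each slice's fraction of total spend, largest first."""
    total = sum(spend_by_slice.values())
    if total <= 0:
        return {}
    ranked = sorted(spend_by_slice.items(), key=lambda kv: kv[1], reverse=True)
    return {name: amount / total for name, amount in ranked}


def top_n_share(spend_by_slice: dict[str, float], n: int = 1) -> float:
    """Fraction of total spend held by the n largest slices."""
    shares = list(spend_shares(spend_by_slice).values())
    return sum(shares[:n])


# Figures are invented for illustration.
spend = {"Engineering": 600.0, "Sales": 300.0, "Finance": 100.0}
shares = spend_shares(spend)
```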

5. Renewal and contract exposure

Business question

Which contracts deserve near-term review, and where is the organizational impact highest?

Typical outputs

- Contracts renewing in the next 30, 60, or 90 days

- Renewal exposure by Organizational View

- High-cost or high-overlap renewals that should be reviewed manually

Why it matters

This creates a time-bound queue instead of a static dashboard.
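
The 30, 60, and 90 day windows can be sketched as a bucketing function over renewal dates. The contract names and dates below are made up, and `renewal_window` is a hypothetical helper; how renewal dates are actually exposed through MCP should be verified in your tenant.

```python
from datetime import date
from typing import Optional

WINDOWS = (30, 60, 90)  # days, matching the review windows in this section


def renewal_window(renewal: date, today: date) -> Optional[int]:
    """Return the smallest window (in days) containing the renewal date.

    Returns None for renewals already past or more than 90 days out.
    """
    days_out = (renewal - today).days
    if days_out < 0:
        return None
    for window in WINDOWS:
        if days_out <= window:
            return window
    return None


today = date(2025, 1, 1)  # fixed date so the example is reproducible
# Contract names and dates are made up for illustration.
contracts = {
    "Contract A": date(2025, 1, 20),
    "Contract B": date(2025, 2, 25),
    "Contract C": date(2025, 6, 1),
}
buckets = {name: renewal_window(d, today) for name, d in contracts.items()}
```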

6. Functional overlap candidates

Business question

Which apps appear to serve similar business functions and may deserve consolidation review?

Typical outputs

- App groups sharing the same Functional Capability Path

- Slices where multiple comparable tools are used

- Candidates with concentrated spend or limited distribution

- Apps that appear duplicative in a specific organizational area

Why it matters

This turns the dashboard into a rationalization tool instead of a reporting surface.
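
Overlap detection along these lines can be sketched as grouping apps by organizational slice and Functional Capability Path, then keeping groups with more than one distinct app. The rows below are placeholders rather than Torii output, and `overlap_candidates` is an illustrative name.

```python
from collections import defaultdict

# Each record: (app name, capability path, organizational slice).
# These rows are placeholders, not Torii output.
records = [
    ("App A", "Collaboration > Team Chat", "Finance"),
    ("App B", "Collaboration > Team Chat", "Finance"),
    ("App B", "Collaboration > Team Chat", "Sales"),
    ("App C", "Security > Password Management", "Finance"),
]


def overlap_candidates(rows):
    """Group apps by (slice, capability path); keep groups with 2+ distinct apps."""
    groups = defaultdict(set)
    for app, path, org_slice in rows:
        groups[(org_slice, path)].add(app)
    return {key: sorted(apps) for key, apps in groups.items() if len(apps) > 1}


candidates = overlap_candidates(records)
```

Here only Finance is flagged for team chat, because Sales uses a single tool for that capability.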

Decision queue design

The dashboard should end with a decision queue rather than a forced recommendation.

Each queue item should include:

- App name

- Functional capability

- Organizational slice affected

- Why it was flagged

- Data confidence

- Suggested next review step

Example queue labels

- Review for overlap

- Review for renewal timing

- Review for concentrated spend

- Review for limited usage footprint

- Review for license mismatch

- Insufficient data, verify manually

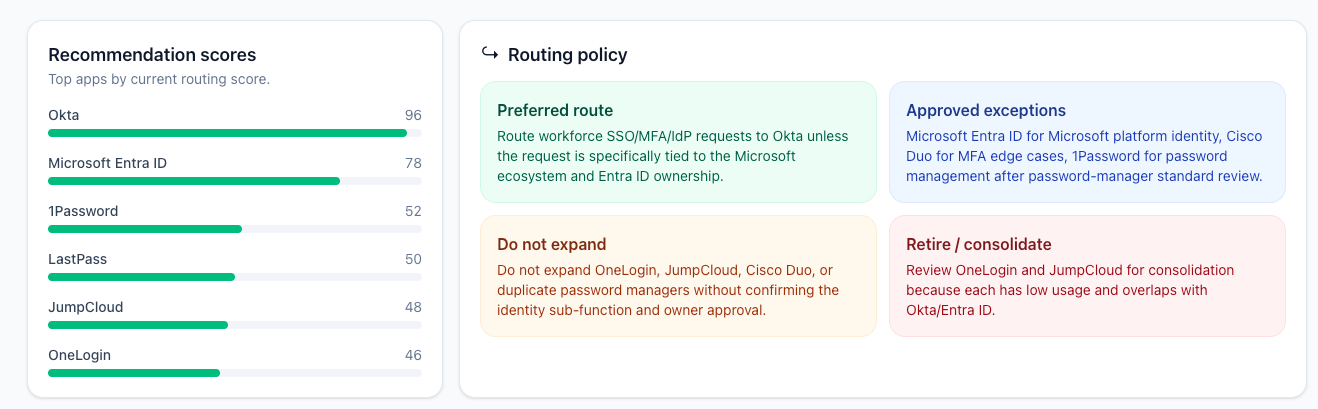
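
For teams that later post-process the assistant's output, a queue item could be represented roughly as follows. This is a sketch under assumptions: the `QueueItem` class, the three-level confidence scale, and the sample values are illustrative, while the labels are the example queue labels from this section.

```python
from dataclasses import dataclass

QUEUE_LABELS = {
    "Review for overlap",
    "Review for renewal timing",
    "Review for concentrated spend",
    "Review for limited usage footprint",
    "Review for license mismatch",
    "Insufficient data, verify manually",
}


@dataclass
class QueueItem:
    app_name: str
    functional_capability: str
    org_slice: str
    label: str        # why it was flagged, using one of QUEUE_LABELS
    confidence: str   # "high", "medium", or "low" (assumed scale)
    next_step: str

    def __post_init__(self):
        # Reject action-style labels so the queue stays advisory.
        if self.label not in QUEUE_LABELS:
            raise ValueError(f"unknown queue label: {self.label!r}")

    def render(self) -> str:
        return (
            f"{self.app_name} | {self.functional_capability} | {self.org_slice} | "
            f"{self.label} | confidence: {self.confidence} | next: {self.next_step}"
        )


item = QueueItem("App A", "Team chat", "Finance", "Review for overlap",
                 "medium", "Compare with App B before the next renewal")
```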

Recommendation model

Recommendations should be explainable and advisory.

Use factors like:

- Overlap strength

- Usage concentration

- License concentration

- Spend significance

- Renewal timing

- Data confidence

Example scoring language

- High review priority: strong overlap, meaningful spend, near-term renewal

- Medium review priority: moderate overlap or concentration, but incomplete commercial context

- Low review priority: weak overlap signal or limited supporting evidence
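
The scoring language above can be turned into a small rule-based function that returns both a priority and the reasons behind it. The rules below are one possible reading of those three tiers, not a Torii feature; `Signals` and `review_priority` are hypothetical names, and what counts as "strong" overlap or "meaningful" spend is left for you to define.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    overlap: str                  # "strong", "moderate", or "weak"
    meaningful_spend: bool
    near_term_renewal: bool
    concentrated: bool = False    # usage or license concentration


def review_priority(s: Signals) -> tuple[str, list[str]]:
    """Return (priority, reasons) so every label can be explained."""
    reasons = []
    if s.overlap in ("strong", "moderate"):
        reasons.append(f"{s.overlap} overlap")
    if s.concentrated:
        reasons.append("usage or license concentration")
    if s.meaningful_spend:
        reasons.append("meaningful spend")
    if s.near_term_renewal:
        reasons.append("near-term renewal")

    if s.overlap == "strong" and s.meaningful_spend and s.near_term_renewal:
        return "High", reasons
    if s.overlap in ("strong", "moderate") or s.concentrated:
        return "Medium", reasons
    return "Low", reasons or ["limited supporting evidence"]


priority, reasons = review_priority(Signals("strong", True, True))
```

Returning the reasons alongside the label keeps the result advisory and explainable rather than a bare score.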

Do not present recommendations as final actions

The assistant should say things like:

- This app is a strong review candidate

- This appears to overlap with other tools in the same capability area

- This renewal deserves manual review

It should not say:

- Remove this app

- Cancel this contract

- Reassign these users

- Run this workflow

Confidence and data-quality handling

A strong dashboard should admit uncertainty.

Common issues to handle

- Missing Organizational View values

- Renamed fields

- Inconsistent labels

- Partial usage coverage

- Incomplete spend or contract linkage

- App classification ambiguity

Recommended behavior

If a section depends on a field that is missing or no longer stable:

- Pause that section

- Explain what is missing

- Ask for a replacement mapping or manual review

- Avoid silently guessing

Good example

The Legal Entity drilldown is incomplete because the mapped field is missing for part of the population. The renewal section below excludes records without a mapped Legal Entity.

Bad example

Inventing a grouping or silently collapsing unknown values into a confident summary.
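
The pause-and-explain behavior can be sketched as a gate that runs before a section is produced. The 20% tolerance, the `section_status` helper, and the `legal_entity` field name are assumptions for illustration; choose a threshold that fits your own data.

```python
def section_status(records: list[dict], field_name: str, max_missing: float = 0.2) -> dict:
    """Decide whether a dashboard section can run on the mapped field.

    max_missing is an assumed tolerance; pick one that fits your data.
    """
    total = len(records)
    missing = sum(1 for r in records if not r.get(field_name))
    rate = missing / total if total else 1.0
    if rate > max_missing:
        return {
            "status": "paused",
            "note": f"{field_name} is missing for {missing} of {total} records; "
                    "provide a replacement mapping or review manually.",
        }
    note = f"Excludes {missing} records without {field_name}." if missing else ""
    return {"status": "ok", "note": note}


# Placeholder rows; legal_entity is a hypothetical field name.
rows = [
    {"app": "App A", "legal_entity": "Entity 1"},
    {"app": "App B", "legal_entity": None},
    {"app": "App C"},
    {"app": "App D", "legal_entity": "Entity 2"},
]
result = section_status(rows, "legal_entity")
```

In this sample half of the records lack the mapped field, so the section is paused with an explanation instead of producing a confident summary.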

Prompt patterns for business users

Use prompts that are simple, direct, and tied to the approved Organizational View.

Portfolio prompts

- Give me a portfolio summary by Business Unit.

- Show the top software categories by Legal Entity.

Usage prompts

- Show software usage by Department.

- Which apps are most concentrated in a single Region?

License prompts

- Show license distribution by Cost Center.

- Which collaboration tools have the widest license footprint by Business Unit?

Spend prompts

- Summarize software spend by Legal Entity.

- Which apps have the highest spend concentration in one Department?

Renewal prompts

- Show renewal exposure in the next 60 days by Cost Center.

- Which Legal Entities are most affected by upcoming renewals?

Rationalization prompts

- Which apps look functionally overlapping in Finance?

- Show overlap candidates by Business Unit for collaboration tools.

- Why was this app flagged as a rationalization candidate?

Explainability requirements

Every important dashboard finding should be explainable.

For each recommendation or flag, the assistant should be able to answer:

- What data it looked at

- Which Organizational View was applied

- Which factors increased the review priority

- Whether the conclusion is high or low confidence

- What the next human step should be

Suggested output pattern

A clean response format usually works best:

- Summary

- Key findings

- Breakdown by Organizational View

- Review candidates

- Confidence notes

- Suggested next human reviews

When plain ChatGPT is enough

Plain ChatGPT plus Torii MCP is enough when you need:

- A fast deployment path

- Interactive natural-language analysis

- Low setup overhead

- A human-in-the-loop dashboard experience

When to consider a wrapper later

Consider a Custom GPT or Agent wrapper only if you need:

- Fixed instructions and stricter behavior

- A branded internal experience

- Reusable prompt flows

- Scheduled refreshes or digest-style outputs

Final checklist

Before you roll this out, confirm that:

- ChatGPT is connected to Torii through MCP

- The dashboard is explicitly read-only

- One Organizational View is approved

- Dashboard sections are defined clearly

- Recommendation language is advisory, not automated

- Field drift or missing data is handled transparently

- Business users have prompt examples they can reuse

Next step

After this guide, the next logical step is to package the experience. There are three options:

- Plain ChatGPT plus MCP.

- Custom GPT wrapper.

- Agent wrapper for scheduled refreshes of the data.
